The Architect's co-pilot.

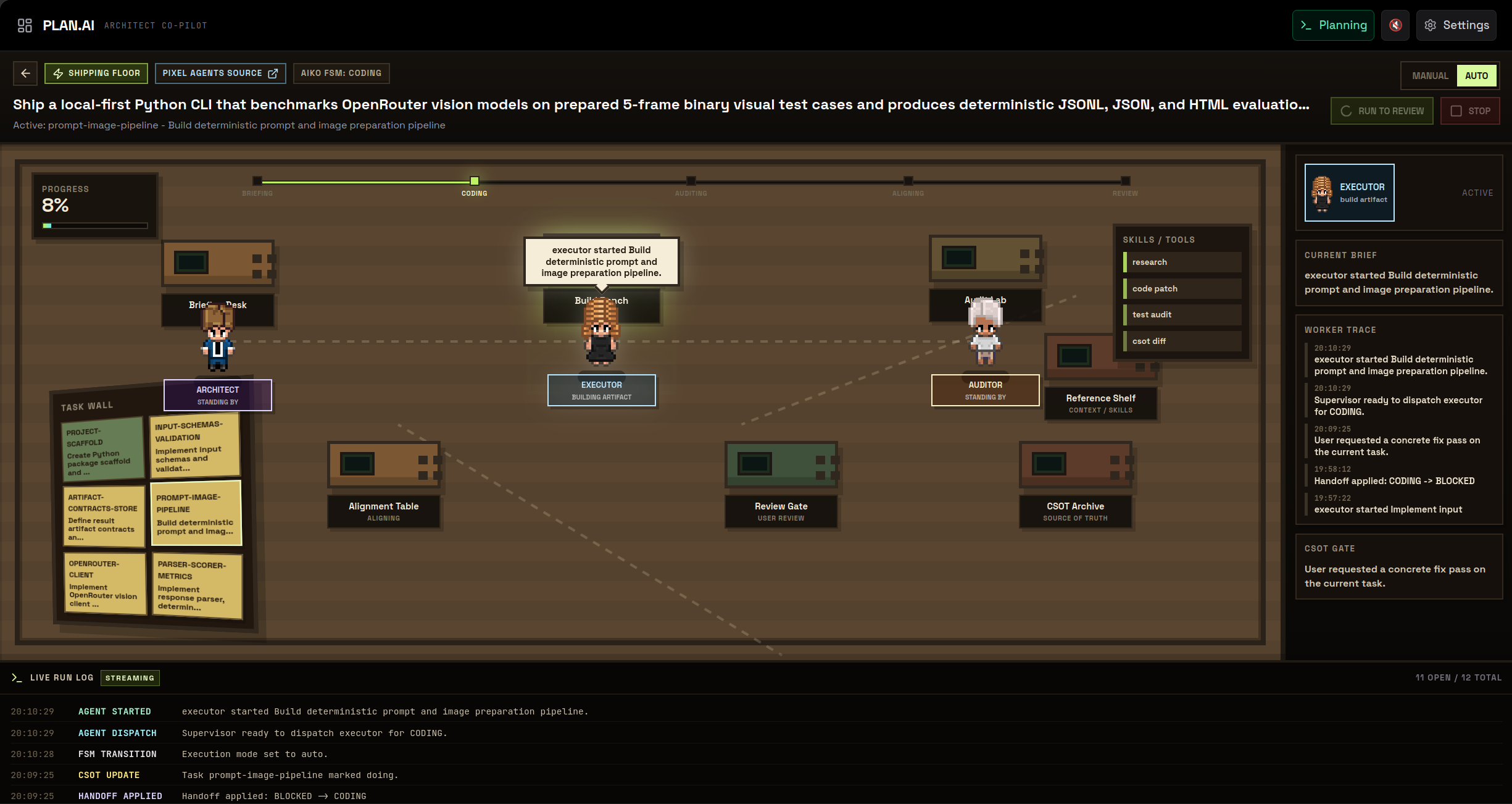

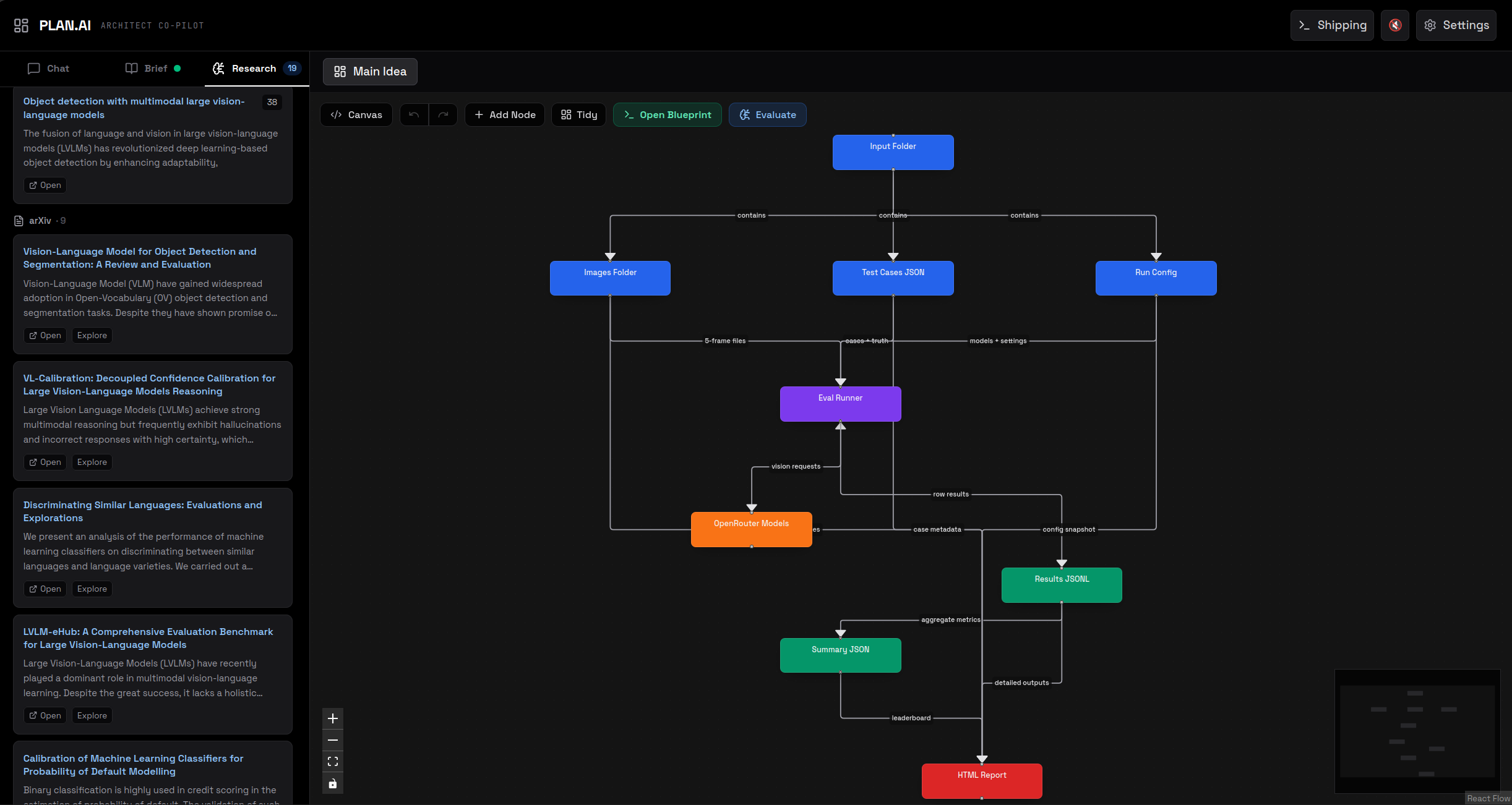

PLAN.AI takes a one-line idea and returns three things: a citation-grounded technical blueprint in 22 sections, a Mermaid C4 diagram, and a Shipping Floor that compiles the blueprint into Architect / Executor / Auditor tasks. Underneath are nine source integrations, a research engine with three depth levels that includes a STORM-lite multi-persona orchestrator, and a Vercel-ready deployment in which every API key is supplied client-side.